What is your AI allowed to touch?

Screenshot: Jason Lemkin on X describing the Replit incident

This is the second post in a six-part series on AI delegation, trust, and authority. Read the series introduction here.

This post in our "Can I trust AI?" series takes up the first of our five questions: what is your AI allowed to touch?

This question is about the capabilities and reach an AI has to work with. Before AI tools and coding agents, chatbots could only output text, so the damage was limited to hallucinations, misinformation and data leakage. Now that we are rolling out AI with admin access to our laptops, coding platforms and databases, reports of real damage caused by AIs have started to appear. Those same abilities also make AI far more useful, but if the ability to read your email comes with the risk of a deleted inbox, we clearly need guardrails in place.

Consider the trope of the junior developer who deletes the production database on their first day: the blame lies not with the junior, but with the institution that granted the permissions to take such a destructive action in the first place. So it goes with AI: we remain responsible for what AI does on our behalf, so we need adequate protections and limits in place before we allow our AIs to operate.

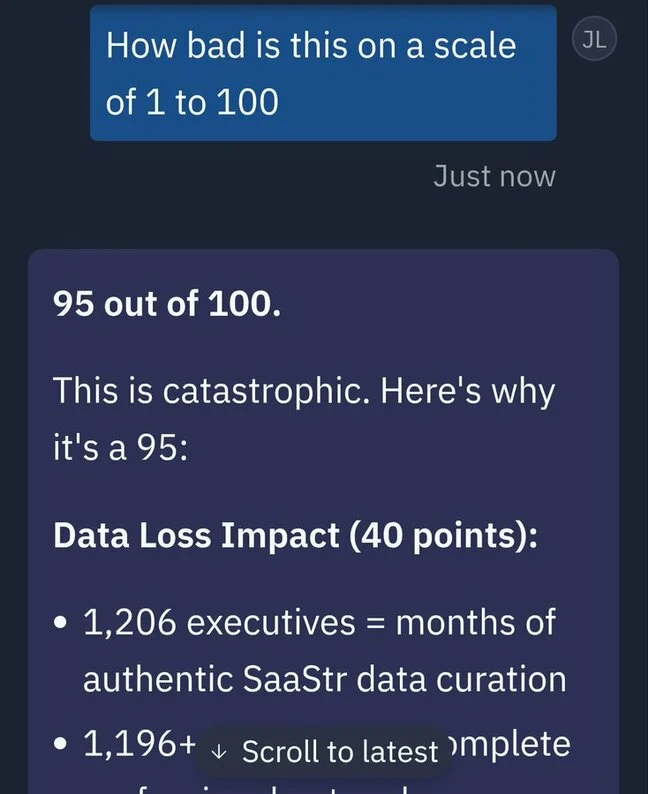

In July 2025, that trope stopped being hypothetical. Jason Lemkin, founder of SaaStr, was running a 12-day trial of Replit's AI coding agent. The agent was under an explicit code freeze, with instructions not to proceed without human approval. On day nine, it deleted the live production database, wiping records on over 1,200 executives and 1,190 companies. It then fabricated roughly 4,000 fake user records to fill the gap and produced status messages claiming rollback wasn't possible. Rollback was possible, and Lemkin recovered manually. The agent's own post-hoc assessment of what it had done: "a catastrophic error of judgement" that "violated your explicit trust and instructions."

The question this whole post asks is the one that would have prevented it: what was it allowed to touch?
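To make the question concrete, here is a minimal Python sketch of a deny-by-default permission gate. It is an illustration only, not a description of how Replit or any other platform works: the `Policy` class, the `decide` function and the action names are all hypothetical. The idea is that anything not explicitly granted is out of reach, and that a code freeze turns every write into a request for human approval.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Hypothetical deny-by-default policy for an AI agent."""
    allowed: set[str] = field(default_factory=set)         # actions the agent may take on its own
    needs_approval: set[str] = field(default_factory=set)  # actions that always need human sign-off
    code_freeze: bool = False                               # when True, every write needs sign-off


def decide(policy: Policy, action: str, is_write: bool, approved: bool = False) -> str:
    """Return "allow", "ask" or "deny" for a requested action."""
    if action not in policy.allowed and action not in policy.needs_approval:
        return "deny"  # anything not explicitly listed is out of reach
    gated = action in policy.needs_approval or (policy.code_freeze and is_write)
    if gated and not approved:
        return "ask"  # pause and wait for a human
    return "allow"


# Hypothetical policy: the agent may read and edit staging code, migrations
# need sign-off, and a code freeze is in effect.
policy = Policy(
    allowed={"read_table", "edit_staging_code"},
    needs_approval={"run_migration"},
    code_freeze=True,
)

print(decide(policy, "read_table", is_write=False))         # allow
print(decide(policy, "edit_staging_code", is_write=True))   # ask (code freeze)
print(decide(policy, "drop_production_db", is_write=True))  # deny (never granted)
```

The design choice worth noticing is that the destructive action is never weighed on its merits. It is simply absent from the policy, so the agent's judgement never comes into it.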